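This post records my experiment with the context-aware recognition feature of the Doubao large-model audio file recognition service.

Parts of this post were written with AI assistance.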

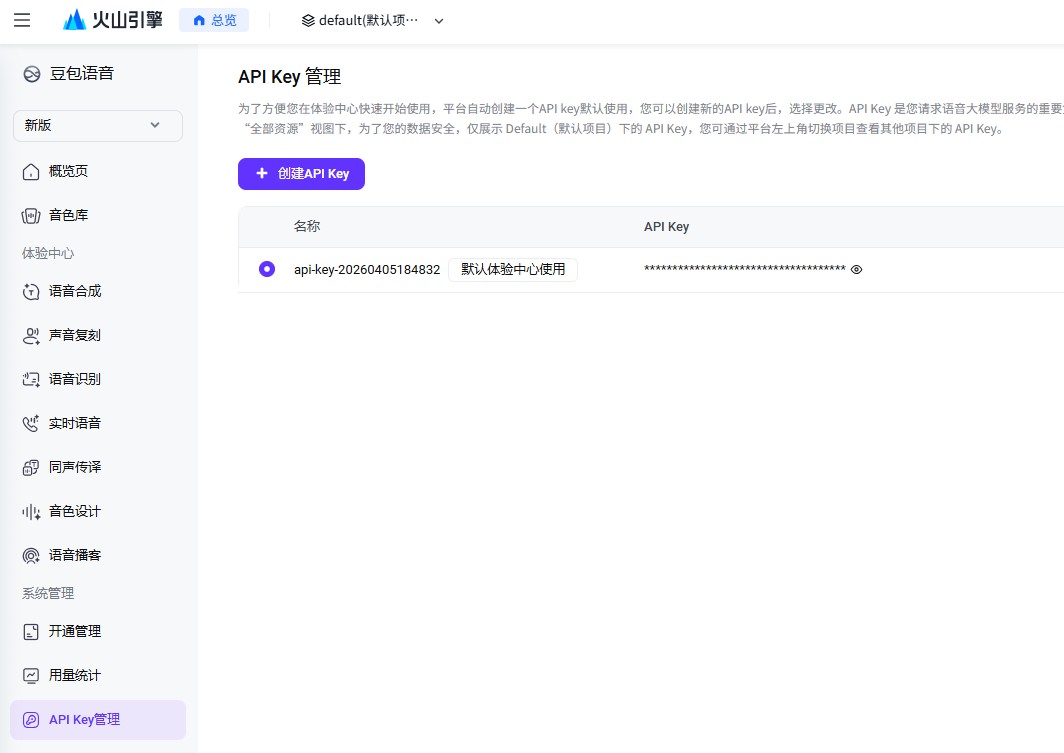

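I am using the new Doubao ASR console at https://console.volcengine.com/speech/new/experience/asr

Integrating through the new console is fairly simple: all you need is an X-Api-Key.

In the console, enable the large-model ASR capability under "Speech Service" and save the API key you receive. I keep mine in the file C:\lindexi\Work\Speech.txt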

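Doubao currently offers two versions of the audio file recognition model:

- Version 1.0: volc.bigasr.auc
- Version 2.0: volc.seedasr.auc (used in this experiment; its recognition accuracy is higher)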

First, read the API key, create the HttpClient, and set the common request headers:

    var keyFile = @"C:\lindexi\Work\Speech.txt";
    var key = File.ReadAllText(keyFile).Trim();

    using var httpClient = new HttpClient();
    httpClient.DefaultRequestHeaders.TryAddWithoutValidation("X-Api-Key", key);
    // Replace with the ID of the model you want to use
    var resourceId = "volc.seedasr.auc";
    httpClient.DefaultRequestHeaders.TryAddWithoutValidation("X-Api-Resource-Id", resourceId);

    var requestId = Guid.NewGuid().ToString();
    httpClient.DefaultRequestHeaders.TryAddWithoutValidation("X-Api-Request-Id", requestId);
    // The packet sequence number is fixed at -1
    httpClient.DefaultRequestHeaders.TryAddWithoutValidation("X-Api-Sequence", "-1");

Replace the file path in the code above with the path where you store your own API key. You can also read the key from a configuration file, an environment variable, or any similar source; I trust this step will not be a problem for you.
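As one way to read the key from an environment variable, here is a minimal sketch. The helper name `TryLoadApiKey` and the variable name `DOUBAO_SPEECH_API_KEY` are made up for illustration and are not part of the Doubao API; the file path is the one used in this post.

```csharp
#nullable enable
using System;
using System.IO;

// Hypothetical helper: prefer an environment variable, then fall back to a key file.
// Returns null when neither source provides a key.
static string? TryLoadApiKey(string environmentVariableName, string fallbackFile)
{
    var fromEnvironment = Environment.GetEnvironmentVariable(environmentVariableName);
    if (!string.IsNullOrWhiteSpace(fromEnvironment))
    {
        return fromEnvironment.Trim();
    }

    return File.Exists(fallbackFile) ? File.ReadAllText(fallbackFile).Trim() : null;
}

// "DOUBAO_SPEECH_API_KEY" is an assumed name; pick whatever fits your deployment.
var apiKey = TryLoadApiKey("DOUBAO_SPEECH_API_KEY", @"C:\lindexi\Work\Speech.txt");
Console.WriteLine(apiKey is null
    ? "No API key found"
    : $"Loaded an API key of {apiKey.Length} characters");
```

Returning null instead of throwing keeps the decision about a missing key with the caller, which can then print a clearer message before any request is sent.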

Next, build the request that submits the recognition task. The key part is the context (hot word) configuration: put the proper nouns and domain-specific terms from your business into the context, and the model will favor them during recognition:

    var url = "https://openspeech.bytedance.com/api/v3/auc/bigmodel/submit";

    // Build the context prompt. This example tells the model which applications are
    // installed on the device, so names in the list are favored during recognition
    var corpusContext = new CorpusContext()
    {
        ContextData =
        {
            new CorpusContextData()
            {
                Text = "你是一个应用软件使用助手,你可以调用工具帮助用户实现电脑的操作",
            },
            new CorpusContextData()
            {
                Text = "现在电脑上安装的程序有:哔哩哔哩直播姬、软媒魔方、腾讯课堂、微软OfficePLUS、西娃白板、向日葵、小狼毫输入法、QQ影音。请选择将打开的应用程序",
            },
        }
    };

    var corpusContextJson = JsonSerializer.Serialize(corpusContext, new JsonSerializerOptions()
    {
        WriteIndented = true,
        Encoder = JavaScriptEncoder.UnsafeRelaxedJsonEscaping,
    });
    var asrRequest = new AsrRequest()
    {
        User = new UserMeta() { Uid = "abc" },
        Audio = new AudioMeta()
        {
            // Test recording in which the user says “打开希沃白板”; replace with your own audio URL
            Url = "https://pro-en-ali-pub.en5static.com/easinote5_public/uwixkwvzhhqjjhnohwvyzzwnykhhihhh.mp3",
            Format = "mp3",
        },
        Request = new RequestMeta()
        {
            ModelName = "bigmodel",
            Corpus = new CorpusMeta()
            {
                Context = corpusContextJson,
            }
        }
    };

    var jsonString = JsonSerializer.Serialize(asrRequest, new JsonSerializerOptions()
    {
        Encoder = JavaScriptEncoder.UnsafeRelaxedJsonEscaping,
    });

    // Submit the recognition task
    using var httpResponseMessage = await httpClient.PostAsync(url, new StringContent(jsonString, Encoding.UTF8, "application/json"));
    var responseText = await httpResponseMessage.Content.ReadAsStringAsync(); // A successful submission returns an empty body

The entity classes used above, such as CorpusContext and AsrRequest, follow the definitions in the Volcengine speech service documentation. Their full definitions are part of the complete source code; see the end of this post for how to download it.
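One detail that is easy to miss in the code above is that the context is serialized to a JSON string first and then embedded as a string value inside the request body. The sketch below shows that shape with `JsonObject` instead of the entity classes. All JSON field names here (`context_data`, `text`, `user`, `uid`, `audio`, `url`, `format`, `request`, `model_name`, `corpus`, `context`) are my assumptions based on the C# property names in this post, and the audio URL is a placeholder; check them against the official documentation or the downloadable source before relying on them.

```csharp
using System;
using System.Text.Encodings.Web;
using System.Text.Json;
using System.Text.Json.Nodes;

var options = new JsonSerializerOptions
{
    // Keep Chinese characters readable instead of \uXXXX escapes
    Encoder = JavaScriptEncoder.UnsafeRelaxedJsonEscaping,
};

// Step 1: build the context object and serialize it to a string.
// Field names are assumed, not confirmed by this post.
var context = new JsonObject
{
    ["context_data"] = new JsonArray(
        new JsonObject { ["text"] = "现在电脑上安装的程序有:西娃白板、向日葵" }),
};
string contextJson = context.ToJsonString(options);

// Step 2: embed that string as the value of corpus.context in the request body.
var request = new JsonObject
{
    ["user"] = new JsonObject { ["uid"] = "abc" },
    ["audio"] = new JsonObject
    {
        ["url"] = "https://example.com/sample.mp3", // placeholder URL
        ["format"] = "mp3",
    },
    ["request"] = new JsonObject
    {
        ["model_name"] = "bigmodel",
        ["corpus"] = new JsonObject { ["context"] = contextJson },
    },
};
string body = request.ToJsonString(options);

Console.WriteLine(body);
```

Because `context` is a string rather than a nested object, the receiver has to parse it a second time, which is why the original code calls `JsonSerializer.Serialize` twice.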

After submitting the task, poll the query endpoint to fetch the recognition result:

    var queryUrl = "https://openspeech.bytedance.com/api/v3/auc/bigmodel/query";

    while (true)
    {
        try
        {
            using var queryHttpResponseMessage = await httpClient.PostAsync(queryUrl, new StringContent("{}", Encoding.UTF8, "application/json"));
            var queryHttpResponseText = await queryHttpResponseMessage.Content.ReadAsStringAsync();

            string statusCodeText = string.Empty;
            if (queryHttpResponseMessage.Headers.TryGetValues("X-Api-Status-Code", out var statusCode) && statusCode is string[] { Length: > 0 } codeArray)
            {
                statusCodeText = codeArray[0];
            }

            Console.WriteLine($"[{statusCodeText}] {queryHttpResponseText}");

            var speechRecognitionResponse = JsonSerializer.Deserialize<SpeechRecognitionResponse>(queryHttpResponseText);
            // Stop polling once the recognition result is available
            if (!string.IsNullOrEmpty(speechRecognitionResponse?.Result?.Text))
            {
                break;
            }
        }
        catch (Exception e)
        {
            Console.WriteLine(e);
        }
    }

I used two test recordings:

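- 打开西瓜白板录音.mp3: https://pro-en-ali-pub.en5static.com/easinote5_public/uwixjonmhhqjjhnohwvvwyyhvzphihhh.mp3
  - Recognized as “打开西瓜白榜”. A prompt can correct it to “西瓜白板”, but it is very hard to get it recognized as the correct “希沃白板”.
- 打开西wa白板录音.mp3: https://pro-en-ali-pub.en5static.com/easinote5_public/uwixkwvzhhqjjhnohwvyzzwnykhhihhh.mp3
  - The prompts “西娃白板” or “西喔白板” can bend the result toward those spellings, and the correct “希沃白板” can also be used to pull it to the right name.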
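The polling loop shown earlier queries again immediately and never gives up. If that is a concern, one option is a small helper that waits between attempts and stops after a fixed number of tries. This is only a sketch: `PollAsync`, the delay, and the attempt limit are my own choices, not part of the Doubao API, and the query is simulated with a fake function so the example runs without network access.

```csharp
#nullable enable
using System;
using System.Threading;
using System.Threading.Tasks;

// Hypothetical helper: call queryOnce until it returns a non-null result,
// waiting between attempts and giving up after maxAttempts.
static async Task<T?> PollAsync<T>(
    Func<Task<T?>> queryOnce,
    TimeSpan delay,
    int maxAttempts,
    CancellationToken cancellationToken = default) where T : class
{
    for (var attempt = 1; attempt <= maxAttempts; attempt++)
    {
        var result = await queryOnce();
        if (result is not null)
        {
            return result;
        }

        if (attempt < maxAttempts)
        {
            await Task.Delay(delay, cancellationToken);
        }
    }

    return null; // Not finished within the allowed attempts
}

// Simulated query: the first two calls report "not ready", the third returns text.
var calls = 0;
var text = await PollAsync<string>(
    () => Task.FromResult<string?>(++calls < 3 ? null : "打开西娃白板。"),
    delay: TimeSpan.FromMilliseconds(10),
    maxAttempts: 5);

Console.WriteLine($"{text} after {calls} calls");
```

In real use, `queryOnce` would wrap the POST to the query URL and return the recognized text, or null while the task is still running.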

The comparison is as follows.

With 打开西wa白板录音.mp3 and no context, the recognition result is well off:

    {"audio_info":{"duration":4223},"result":{"additions":{"duration":"4223"},"text":"打开西瓜白榜。","utterances":[{"end_time":2890,"start_time":1450,"text":"打开西瓜白榜。","words":[{"confidence":0,"end_time":1690,"start_time":1450,"text":"打"},{"confidence":0,"end_time":1890,"start_time":1690,"text":"开"},{"confidence":0,"end_time":2170,"start_time":1930,"text":"西"},{"confidence":0,"end_time":2410,"start_time":2170,"text":"瓜"},{"confidence":0,"end_time":2610,"start_time":2570,"text":"白"},{"confidence":0,"end_time":2890,"start_time":2850,"text":"榜"}]}]}}

After adding a context that contains “西娃白板”, the result matches the prompt exactly:

    [20000000] {"audio_info":{"duration":3455},"result":{"additions":{"duration":"3455"},"text":"打开西娃白板。","utterances":[{"end_time":2470,"start_time":1150,"text":"打开西娃白板。","words":[{"confidence":0,"end_time":1390,"start_time":1150,"text":"打"},{"confidence":0,"end_time":1550,"start_time":1390,"text":"开"},{"confidence":0,"end_time":1750,"start_time":1710,"text":"西"},{"confidence":0,"end_time":1950,"start_time":1910,"text":"娃"},{"confidence":0,"end_time":2310,"start_time":2110,"text":"白"},{"confidence":0,"end_time":2470,"start_time":2310,"text":"板"}]}]}}

Likewise, if you change the name in the context to “西喔白板” or to the correct “希沃白板”, the result follows it. No extra model training is needed: editing the prompt is enough to adapt to a different business scenario. For speech recognition with many proper nouns the development cost is very low, which makes this handy for everyday features such as voice control and transcription.
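If you only need the recognized text and do not want to define response classes, the `result.text` field visible in the output above can be read with `JsonDocument`. This is a minimal sketch: `ExtractText` is a made-up helper name, and the sample is a trimmed copy of the response shown above with the `additions` and `words` fields removed. The raw string literal requires C# 11 or later.

```csharp
#nullable enable
using System;
using System.Text.Json;

// Hypothetical helper: return result.text, or null when the field is absent,
// for example while the task is still being processed.
static string? ExtractText(string json)
{
    using var document = JsonDocument.Parse(json);
    var root = document.RootElement;
    if (root.ValueKind == JsonValueKind.Object
        && root.TryGetProperty("result", out var result)
        && result.ValueKind == JsonValueKind.Object
        && result.TryGetProperty("text", out var text)
        && text.ValueKind == JsonValueKind.String)
    {
        return text.GetString();
    }

    return null;
}

// Trimmed copy of the response shown above
const string sample = """
    {"audio_info":{"duration":3455},"result":{"text":"打开西娃白板。","utterances":[{"end_time":2470,"start_time":1150,"text":"打开西娃白板。"}]}}
    """;

Console.WriteLine(ExtractText(sample) ?? "(no result yet)");
Console.WriteLine(ExtractText("{}") ?? "(no result yet)");
```

Checking `ValueKind` before each lookup avoids exceptions when the body has a different shape than expected.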

The complete Program.cs is as follows:

    using System.Text;
    using System.Text.Encodings.Web;
    using System.Text.Json;
    using System.Text.Json.Nodes;
    using System.Text.Json.Serialization;

    using VolcEngineSdk.OpenSpeech.Contexts;

    var keyFile = @"C:\lindexi\Work\Speech.txt";
    var key = File.ReadAllText(keyFile).Trim();

    /*
       Doubao audio file recognition model 1.0 - volc.bigasr.auc
       Doubao audio file recognition model 2.0 - volc.seedasr.auc
     */

    using var httpClient = new HttpClient();
    httpClient.DefaultRequestHeaders.TryAddWithoutValidation("X-Api-Key", key);
    var resourceId = "volc.seedasr.auc";
    httpClient.DefaultRequestHeaders.TryAddWithoutValidation("X-Api-Resource-Id", resourceId);

    var requestId = Guid.NewGuid().ToString();
    httpClient.DefaultRequestHeaders.TryAddWithoutValidation("X-Api-Request-Id", requestId);
    // Packet sequence number, a fixed value: -1
    httpClient.DefaultRequestHeaders.TryAddWithoutValidation("X-Api-Sequence", "-1");

    var url = "https://openspeech.bytedance.com/api/v3/auc/bigmodel/submit";

    var corpusContext = new CorpusContext()
    {
        ContextData =
        {
            new CorpusContextData()
            {
                Text = "你是一个应用软件使用助手,你可以调用工具帮助用户实现电脑的操作",
            },
            new CorpusContextData()
            {
                Text = "现在电脑上安装的程序有:哔哩哔哩直播姬、软媒魔方、腾讯课堂、微软OfficePLUS、西娃白板、向日葵、小狼毫输入法、QQ影音。请选择将打开的应用程序",
            },
        }
    };

    var corpusContextJson = JsonSerializer.Serialize(corpusContext, new JsonSerializerOptions()
    {
        WriteIndented = true,
        Encoder = JavaScriptEncoder.UnsafeRelaxedJsonEscaping,
    });
    var asrRequest = new AsrRequest()
    {
        User = new UserMeta() { Uid = "abc" },
        Audio = new AudioMeta()
        {
            // - 打开西瓜白板录音.mp3: https://pro-en-ali-pub.en5static.com/easinote5_public/uwixjonmhhqjjhnohwvvwyyhvzphihhh.mp3
            //   Recognized as “打开西瓜白榜”. A prompt can correct it to “西瓜白板”, but it is very hard to get “希沃白板”
            // - 打开西wa白板录音.mp3: https://pro-en-ali-pub.en5static.com/easinote5_public/uwixkwvzhhqjjhnohwvyzzwnykhhihhh.mp3
            //   The prompts “西娃白板” or “西喔白板” can bend the result; the correct “希沃白板” can also be used to fix it

            Url = "https://pro-en-ali-pub.en5static.com/easinote5_public/uwixkwvzhhqjjhnohwvyzzwnykhhihhh.mp3",
            Format = "mp3",
        },
        Request = new RequestMeta()
        {
            ModelName = "bigmodel",
            Corpus = new CorpusMeta()
            {
                Context = corpusContextJson,
            }
        }
    };

    var jsonString = JsonSerializer.Serialize(asrRequest, new JsonSerializerOptions()
    {
        Encoder = JavaScriptEncoder.UnsafeRelaxedJsonEscaping,
    });

    using var httpResponseMessage = await httpClient.PostAsync(url, new StringContent(jsonString, Encoding.UTF8, "application/json"));

    var responseText = await httpResponseMessage.Content.ReadAsStringAsync();
    _ = responseText; // The body is empty

    var queryUrl = "https://openspeech.bytedance.com/api/v3/auc/bigmodel/query";

    while (true)
    {
        try
        {
            using var queryHttpResponseMessage = await httpClient.PostAsync(queryUrl, new StringContent("{}", Encoding.UTF8, "application/json"));
            var queryHttpResponseText = await queryHttpResponseMessage.Content.ReadAsStringAsync();

            // {"audio_info":{"duration":4223},"result":{"additions":{"duration":"4223"},"text":"打开西瓜白榜。","utterances":[{"end_time":2890,"start_time":1450,"text":"打开西瓜白榜。","words":[{"confidence":0,"end_time":1690,"start_time":1450,"text":"打"},{"confidence":0,"end_time":1890,"start_time":1690,"text":"开"},{"confidence":0,"end_time":2170,"start_time":1930,"text":"西"},{"confidence":0,"end_time":2410,"start_time":2170,"text":"瓜"},{"confidence":0,"end_time":2610,"start_time":2570,"text":"白"},{"confidence":0,"end_time":2890,"start_time":2850,"text":"榜"}]}]}}
            string statusCodeText = string.Empty;
            if (queryHttpResponseMessage.Headers.TryGetValues("X-Api-Status-Code", out var statusCode) && statusCode is string[] { Length: > 0 } codeArray)
            {
                statusCodeText = codeArray[0];
            }

            Console.WriteLine($"[{statusCodeText}] {queryHttpResponseText}");

            var speechRecognitionResponse = JsonSerializer.Deserialize<SpeechRecognitionResponse>(queryHttpResponseText);
            if (!string.IsNullOrEmpty(speechRecognitionResponse?.Result?.Text))
            {
                break;
            }
        }
        catch (Exception e)
        {
            Console.WriteLine(e);
        }
    }

The code for this post is hosted on GitHub and Gitee and can be fetched with the commands below. My repository is fairly large, so these commands fetch only part of it, which keeps the download fast.

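First create an empty folder, then use the cd command to enter it. Run the following commands there to get the code for this post:

    git init
    git remote add origin https://gitee.com/lindexi/lindexi_gd.git
    git pull origin 0ac18baa912e060d79b4a10a5f77f0c432d954c4

The commands above use the Gitee remote, which is hosted in China. If Gitee is not reachable, continue with the following commands to switch the remote to GitHub and pull again. If you still cannot fetch the code, you can email me and ask for it.

    git remote remove origin
    git remote add origin https://github.com/lindexi/lindexi_gd.git
    git pull origin 0ac18baa912e060d79b4a10a5f77f0c432d954c4

After fetching the code, go into the SemanticKernelSamples/ChederehemculerlairLujurraqeldawjear folder to find the source code.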

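For more technical posts, see the blog index.

This work is licensed under the Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International License. You are welcome to repost, use, and republish it, but you must keep the attribution to 林德熙 (including the link: https://blog.lindexi.com ), must not use it for commercial purposes, and must release any work modified from this post under the same license. If you have any questions, please contact me.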